
Adversarial Mindset

The phrase “think like an attacker” has been abused to death.

It shows up in vendor decks, LinkedIn posts, threat modeling workshops, detection strategy docs, AI security conversations, and every other place where security language goes to become wallpaper. Everyone wants to say they have an adversary mindset. Very few people want to pay the cost of actually having one.

Because the adversary mindset is not a slogan. It is not a workshop exercise. It is not asking a chatbot for “10 ways this system could be attacked” and pasting the answer into a risk register.

It is a way of seeing systems.

And once you develop it, you do not really get to turn it off.

You walk through an airport and mentally map physical access controls. You review a cloud architecture diagram and see privilege escalation paths before you see the intended data flow. You sit through a vendor demo and spend the whole time thinking about the trust model, the identity boundary, the logs they probably do not expose, and what happens if their admin plane gets compromised.

This is what adversarial thinking actually demands.

It is useful. It is powerful. It is one of the few thinking models that consistently exposes the gap between how systems are supposed to work and how they can be abused.

But it is also uncomfortable. It creates cognitive load. It creates friction with teams. It can isolate you. And when it is misapplied, it turns into performative contrarianism: the security version of “I found a flaw, therefore I am the smartest person in the room.”

Now add AI to the picture.

AI tools make it easier than ever to generate attack ideas, abuse cases, phishing pretexts, payload variations, recon paths, and threat model outputs. That is not theoretical. It is already happening. The barrier to entry is lower, the speed of experimentation is higher, and more people can now do things that used to require deeper technical experience.

Some of that is good. More people can learn. More people can practice. More people can ask better questions.

But there is a dangerous side too: the world is about to be flooded with confident, AI-generated attack paths that sound smart but ignore context, attacker economics, operational reality, and business impact.

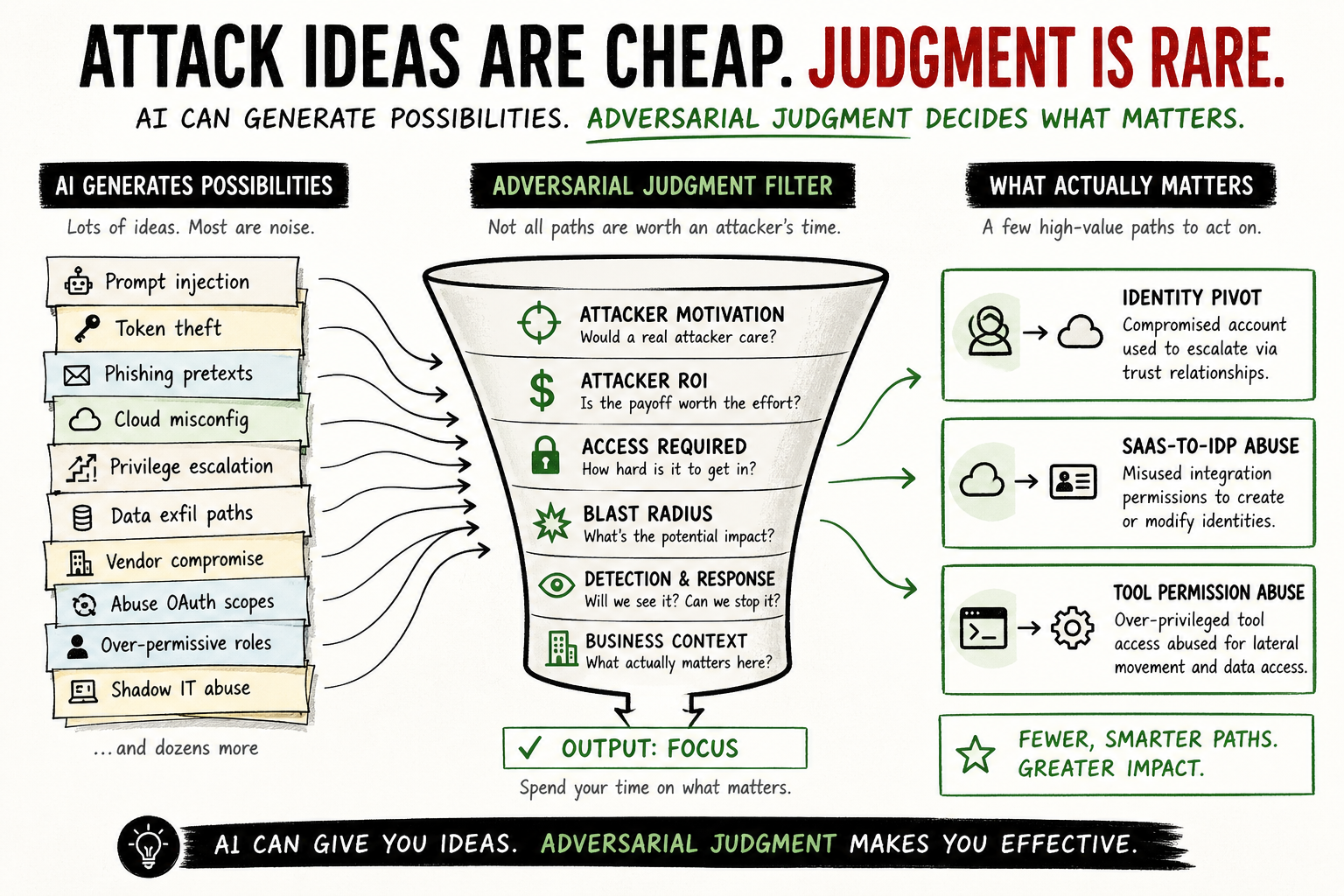

AI made attack ideas cheap.

Adversarial judgment is still rare.

That is the part people keep missing.

The Adversary Mindset Is Not “Finding Problems”

A lot of people confuse adversarial thinking with problem finding.

They look at a design, find something wrong, and call that “thinking like an attacker.” But finding problems is easy. Finding meaningful problems is the hard part.

Real adversarial thinking is not about asking, “Can this break?”

Everything can break.

The better questions are:

- Would a real attacker care?

- What would they do first?

- What would they do next?

- What trust assumption are we making that they would not respect?

- What identity, token, permission, integration, or operational shortcut turns this from a bug into an attack path?

- What would make this worth the attacker’s time?

- What would we actually see if it happened?

That is where the mindset becomes useful.

Not in the theatrical “everything is vulnerable” sense. In the practical sense. The kind that helps you understand which paths matter, which ones are noise, and which ones are quietly sitting there waiting to become incident evidence six months from now.

This is especially important now, because AI can generate plausible-sounding possibilities all day long. It can produce lists of risks, attack trees, test plans, payload ideas, and detection concepts. Some of them will be useful. Many of them will be garbage dressed in confident language.

The value is not in generating more possibilities.

The value is in knowing which possibilities deserve attention.

Cost One: You Never Stop Seeing Attack Paths

The first cost is mental.

Once you internalize adversarial thinking, you start seeing attack paths everywhere. Not just in the systems you are paid to analyze, but in everything.

A colleague describes a new internal tool. You are not just listening to the feature set. You are mapping the authentication flow, wondering where the permissions live, what happens when a session expires, whether the audit trail includes failed actions, and who owns the service account behind it.

Your company rolls out a new HR platform. Everyone else sees convenience. You see identity data, payroll adjacency, SSO dependency, admin roles, lifecycle automation, and the blast radius of a compromised vendor integration.

A friend shows you a smart home setup. They are excited about automation. You are thinking about IoT segmentation, default credentials, mobile app permissions, cloud dependencies, and whether their “separate network” is real isolation or just decorative VLANing.

A team gets excited about an internal AI agent that can query data, summarize tickets, open pull requests, and call tools. Everyone else sees productivity. You see delegated authority, prompt injection, tool abuse, data exfiltration, confused deputy problems, and the uncomfortable question nobody wants to ask: “What can this thing do if someone convinces it to behave badly?”

This is not paranoia.

It is pattern recognition.

The problem is that pattern recognition does not clock out when the workday ends. You keep running the same background process:

How would I break this? What does the attacker see that the builder does not? What assumption makes this whole thing fragile?

For some people, that is energizing. For most, it is exhausting.

The adversary mindset gives you a sharper lens, but that lens has a cost. You are constantly translating normal systems into abuse cases. You are constantly noticing gaps that other people do not see. You are constantly holding possible futures in your head.

That load adds up.

The only practical way to manage it is to externalize it.

Write the attack paths down. Draw the trust boundaries. Document the assumptions. Turn the mental loop into something visible, reviewable, and disposable. If it stays only in your head, it becomes anxiety. If you put it on paper, it becomes analysis.

That distinction matters.

Cost Two: You Become Friction

The second cost is social.

When you think like an attacker, you create friction. There is no polite way around it.

A product team wants to ship. They have thought through the happy path, the user experience, the edge cases, and the launch plan. You are asking what happens when someone abuses the invitation flow, chains a low-privilege role into a higher one, or uses a legitimate feature in a way the product team never intended.

An engineering team wants to deploy a new service. They have thought about scale, observability, uptime, and failure modes. You are asking what happens if the service account is compromised, whether it can read secrets it does not need, whether the logs capture the right actions, and whether lateral movement is possible from there.

A business team wants to integrate with a third-party SaaS vendor. They have thought about cost, ROI, onboarding, and procurement. You are asking what happens if the vendor gets compromised, what the integration can modify, whether it can create users or change groups, how long the tokens live, and whether anyone would even notice abuse through that path.

You are not trying to be difficult.

But you are difficult.

That is the part security people sometimes do not want to admit. Even when you are right, you are introducing cost into someone else’s plan. You are asking them to slow down, rethink, redesign, add controls, change assumptions, or accept risk explicitly instead of accidentally.

To them, it can feel like negativity.

To you, it is threat modeling.

Both can be true.

The problem is not that adversarial thinkers are “the no people.” The problem is that they are usually operating on a different timeline.

Builders are thinking about launch.

Leadership is thinking about delivery.

Attackers are thinking about abuse.

You are thinking about what happens later, when today’s trust assumption becomes tomorrow’s incident report.

That mismatch creates friction.

The way out is not to make the adversarial thinking softer. It is to make it more useful.

Do not just say, “This is insecure.”

Say:

“Here is the abuse path. Here is why an attacker would care. Here is the likely blast radius. Here is what we can change without killing the project.”

That last part matters.

If all you bring is objections, teams will route around you. If you bring a realistic attack path and a practical alternative, you become useful friction instead of organizational sandpaper.

That is the job.
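To make the four-part format in that quote repeatable, a team could keep every finding in the same fixed shape. The following is a minimal Python sketch under that assumption: the `Finding` class, its field names, and the invitation-flow example are hypothetical illustrations, not a standard schema or a specific product's bug.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    """A reviewable finding: abuse path, attacker motive, blast radius, and a fix."""
    title: str
    abuse_path: list[str]   # ordered steps a real attacker would take
    attacker_motive: str    # why a real attacker would care
    blast_radius: str       # what becomes reachable if the path works
    proposed_change: str    # what we can change without killing the project

    def is_actionable(self) -> bool:
        # Without a concrete path and a concrete change, this is only an objection.
        return bool(self.abuse_path) and bool(self.proposed_change.strip())

    def render(self) -> str:
        steps = "\n".join(f"  {i}. {step}" for i, step in enumerate(self.abuse_path, 1))
        return (
            f"Finding: {self.title}\n"
            f"Abuse path:\n{steps}\n"
            f"Why an attacker would care: {self.attacker_motive}\n"
            f"Likely blast radius: {self.blast_radius}\n"
            f"What we can change: {self.proposed_change}"
        )


# Hypothetical example based on the invitation-flow scenario.
invite_finding = Finding(
    title="Inviters can choose the role granted to invitees",
    abuse_path=[
        "Obtain any low-privilege account",
        "Send an invitation that requests the admin role",
        "Accept the invitation from a second account",
    ],
    attacker_motive="Turns cheap initial access into persistent admin access",
    blast_radius="Every tenant setting the admin role controls",
    proposed_change="Cap invitable roles at the inviter's own role",
)

print(invite_finding.render())
```

Treating `proposed_change` as a required field is the design choice that matters here: a finding cannot be written up without naming a realistic alternative.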

Cost Three: You Are Often Right Too Early

The third cost is harder to explain until you experience it.

It is not just isolation. It is being right too early.

You sit in a design review and everyone is talking about the intended use case. You are the only one modeling the abuse case.

You sit in an incident review and everyone is asking what broke. You are asking what an attacker could have done while monitoring was degraded, credentials were exposed, or teams were distracted.

You sit in a vendor evaluation and everyone is comparing features and pricing. You are asking what happens if the vendor becomes the attacker’s path into your environment.

This can make you feel like you are speaking a different language.

And the worst part is that when the thing you warned about actually happens, it rarely feels satisfying.

Nobody says, “Great call, you saw this coming.”

They ask, “Why was this not fixed earlier?”

And you remember the meeting. The document. The comment. The risk that was accepted without really being understood. The attack path that sounded too theoretical until it became painfully concrete.

That is one of the emotional costs of adversarial thinking: you see some problems before the organization is ready to care about them.

This does not mean you should become bitter. It also does not mean you should turn every risk into a crusade.

It means you need a system.

Document what you see. Capture the assumptions. Rank the risk. Make the tradeoff visible. Then let leadership make leadership decisions.

Your job is to model threats accurately and communicate them clearly.

Your job is not to carry every ignored risk around like a personal failure.

That way lies burnout.

Cost Four: The Mindset Gets Misused

The adversary mindset has another cost: people misuse it constantly.

Some practitioners confuse it with being contrarian.

They poke holes in every idea. They reject every proposal. They perform skepticism as if skepticism itself is expertise. They become the person who can always explain why something might fail, but rarely help anyone make it better.

That is not adversarial thinking.

That is ego with a threat model.

Other practitioners confuse adversarial thinking with assuming the worst possible scenario every time.

They model threats that are technically possible but operationally irrelevant. Nation-state supply chain operations against systems no nation-state cares about. Exotic physical attacks against remote-first SaaS companies. Multi-stage attack chains that require more effort than the target is worth.

That is not adversarial thinking.

That is threat modeling without attacker economics.

And now AI makes this easier to scale.

Ask an AI tool for attack paths and it will give you attack paths. Ask for risks and it will give you risks. Ask for scary scenarios and it will happily produce a buffet of security anxiety.

But the hard question remains:

Would a real attacker bother?

That question is where a lot of generated output falls apart.

Real attackers have constraints. They optimize for access, speed, stealth, repeatability, and return on effort. They do not take the hardest path because it looks cool in a diagram. They take the path that works.

Real adversarial thinking understands that.

It does not ignore context. It depends on context.

The same issue can be critical in one environment and barely interesting in another. The same permission can be harmless in one architecture and a privilege escalation path in another. The same SaaS integration can be routine plumbing or a direct line into your identity layer.
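The point that one permission can be harmless in one architecture and an escalation path in another can be checked mechanically: treat identities, groups, and roles as nodes in a graph and search for a route to something privileged. The sketch below assumes a hand-built edge list with hypothetical node names (`user:helpdesk`, `group:support`, `role:idp-admin`); real attack-path tooling models far more relationship types than this.

```python
from collections import deque


def find_path(edges, start, targets):
    """Return the shortest chain of steps from start to any target, or None.

    edges: iterable of (source, destination, how) tuples, where "how" names the
    permission or relationship that lets source act on or become destination.
    """
    graph = {}
    for src, dst, how in edges:
        graph.setdefault(src, []).append((dst, how))

    came_from = {start: None}  # node -> (previous node, how) on the shortest path
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if node in targets:
            # Walk back through came_from to rebuild the chain of steps.
            steps = []
            while came_from[node] is not None:
                prev, how = came_from[node]
                steps.append(f"{prev} --{how}--> {node}")
                node = prev
            return list(reversed(steps))
        for nxt, how in graph.get(node, []):
            if nxt not in came_from:
                came_from[nxt] = (node, how)
                queue.append(nxt)
    return None


# The same permission appears in both environments.
shared = [("user:helpdesk", "group:support", "can add self to")]
env_a = shared + [("group:support", "app:wiki", "grants read on")]
env_b = shared + [("group:support", "role:idp-admin", "holds")]
PRIVILEGED = {"role:idp-admin"}

print(find_path(env_a, "user:helpdesk", PRIVILEGED))  # None
print(find_path(env_b, "user:helpdesk", PRIVILEGED))
```

In `env_b` the search returns the two-step chain `user:helpdesk --can add self to--> group:support` followed by `group:support --holds--> role:idp-admin`; in `env_a` the identical first edge leads nowhere privileged.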

If your adversarial thinking does not account for business impact, attacker motivation, operational reality, and actual exposure, it is not adversarial thinking.

It is just noise.

And noisy adversarial thinking is worse than useless, because it teaches teams to stop listening.

A Simple Example: The “Boring” SaaS Integration

Take a boring example.

A team wants to connect a new SaaS platform to the company’s identity provider. On paper, this looks routine: SSO, SCIM provisioning, a few admin roles, standard vendor integration, procurement approval, onboarding plan.

Most of the organization sees a normal business system.

The adversarial view sees a new trust path.

What can the integration read?

What can it modify?

Can it create users?

Can it update groups?

Can it assign roles?

Are tokens long-lived?

Who can approve the integration?

Where are admin actions logged?

Are those logs monitored by the security team, or do they live in a vendor console nobody checks?

What happens if the SaaS admin is compromised?

Can that become an identity problem?

This is the kind of thing that makes people roll their eyes during implementation. It sounds like overthinking. It sounds like security slowing things down.

But after enough real incidents, you learn that “boring integration” is often just another way of saying “new trust assumption nobody modeled.”

That is the adversary mindset in practice.

Not dramatic. Not cinematic. Not a hoodie-in-a-dark-room fantasy.

Just asking what the system can be made to do by someone who does not care how it was intended to work.
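The integration questions in this example can be turned into a repeatable checklist. This Python sketch assumes a hypothetical integration record; the scope strings, the 24-hour token threshold, and the two-approver rule are illustrative assumptions, not any vendor's actual API or a recommended standard.

```python
from dataclasses import dataclass


@dataclass
class Integration:
    name: str
    scopes: set[str]             # hypothetical scope names, not a real vendor's API
    token_lifetime_hours: float
    admin_logs_in_siem: bool     # are vendor admin actions forwarded to security monitoring?
    approvers: set[str]          # who can approve changes to the integration


# Scopes that turn a "boring" integration into a path into the identity layer.
IDENTITY_WRITE_SCOPES = {"users:create", "groups:write", "roles:assign"}


def review(integration: Integration, max_token_hours: float = 24) -> list[str]:
    """Return the review questions this integration currently answers badly."""
    concerns = []
    identity_writes = integration.scopes & IDENTITY_WRITE_SCOPES
    if identity_writes:
        concerns.append(f"can modify identity: {sorted(identity_writes)}")
    if integration.token_lifetime_hours > max_token_hours:
        concerns.append(f"long-lived token: {integration.token_lifetime_hours}h")
    if not integration.admin_logs_in_siem:
        concerns.append("admin actions only visible in the vendor console")
    if len(integration.approvers) < 2:
        concerns.append("a single person can approve integration changes")
    return concerns


new_saas = Integration(
    name="new-saas",
    scopes={"users:read", "users:create", "groups:write"},
    token_lifetime_hours=8760,
    admin_logs_in_siem=False,
    approvers={"it-admin"},
)
for concern in review(new_saas):
    print(concern)
```

For `new_saas`, all four checks fire: it can create users and write groups, its token lives for a year, its admin actions stay in the vendor console, and one person can approve changes.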

How to Keep the Value Without Becoming the Problem

The adversary mindset is valuable, but only if you can operationalize it without turning yourself into noise.

Here is the practical version.

Use AI to expand hypotheses, not decide priorities

AI is useful for breadth. Use it to generate angles you may not have considered. Ask it for abuse cases, edge cases, alternative paths, attacker workflows, and detection ideas.

But do not outsource judgment.

AI can help you create a wider map. It cannot reliably tell you which path matters most in your environment. That requires context: architecture, identity model, business impact, telemetry, threat actors, operational constraints, and plain old experience.

Use AI as a sparring partner, not as the security brain.
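A minimal sketch of that division of labor, assuming hypotheses are tracked as simple records: AI output widens the list, but nothing gets a priority until a person attaches a reason grounded in the environment. The `Hypothesis` type and the `merge`, `prioritize`, and `work_queue` helpers are hypothetical, not part of any tool.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Hypothesis:
    description: str
    source: str                     # "ai" or "human": where the idea came from
    priority: Optional[int] = None  # stays unset until someone with context ranks it
    rationale: str = ""             # the environment-specific reason for the priority


def merge(ai_ideas, human_ideas):
    """Widen the map: combine both lists, dropping case-insensitive duplicates."""
    seen, merged = set(), []
    for source, ideas in (("human", human_ideas), ("ai", ai_ideas)):
        for idea in ideas:
            key = idea.strip().lower()
            if key not in seen:
                seen.add(key)
                merged.append(Hypothesis(idea.strip(), source))
    return merged


def prioritize(hypothesis, priority, rationale):
    # Ranking requires a stated reason tied to this environment, not just plausibility.
    if not rationale.strip():
        raise ValueError("a priority needs a rationale grounded in this environment")
    hypothesis.priority, hypothesis.rationale = priority, rationale


def work_queue(hypotheses):
    """Only hypotheses a person has ranked drive work; lowest number first."""
    return sorted((h for h in hypotheses if h.priority is not None), key=lambda h: h.priority)


ideas = merge(
    ai_ideas=["Prompt injection through ticket text", "Token replay against the API",
              "prompt injection through ticket text"],
    human_ideas=["Token replay against the API"],
)
prioritize(ideas[1], 1, "The agent reads customer tickets and can open pull requests")
for h in work_queue(ideas):
    print(h.priority, h.description, "|", h.rationale)
```

The unranked token-replay idea is not discarded; it simply waits in the list until someone can say why it matters here.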

Rank attack paths by attacker ROI

A technically possible attack is not automatically important.

Ask whether the path gives the attacker something useful. Access. Persistence. Privilege. Data. Lateral movement. Stealth. Repeatability. Scale.

If the answer is no, maybe it does not deserve the oxygen you are giving it.

This is where a lot of performative threat modeling fails. It treats possibility as priority.

Attackers do not work that way.

Neither should you.
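As a rough illustration, ROI can be approximated as the number of useful things a path delivers divided by the effort it costs. This sketch counts the gains listed above with equal weight and uses a hypothetical 1 to 5 effort scale; both are simplifying assumptions, and a real ranking would weight gains by what matters in your environment. The example paths are hypothetical.

```python
from dataclasses import dataclass

# What a path can give an attacker, taken from the list above.
GAINS = {"access", "persistence", "privilege", "data",
         "lateral_movement", "stealth", "repeatability", "scale"}


@dataclass
class AttackPath:
    name: str
    gains: set[str]  # which GAINS the path actually delivers
    effort: int      # hypothetical scale: 1 (trivial) to 5 (expensive)

    def roi(self) -> float:
        unknown = self.gains - GAINS
        if unknown:
            raise ValueError(f"unrecognized gains: {sorted(unknown)}")
        if not 1 <= self.effort <= 5:
            raise ValueError("effort must be between 1 and 5")
        # Equal weights are a simplification; possibility alone scores nothing.
        return len(self.gains) / self.effort


def rank(paths):
    """Order paths by attacker return on effort, highest first."""
    return sorted(paths, key=lambda p: p.roi(), reverse=True)


paths = [
    AttackPath("Hardware implant at a remote-first company", {"persistence", "stealth"}, 5),
    AttackPath("Phish a SaaS admin, then add self to a privileged group",
               {"access", "privilege", "persistence", "repeatability"}, 2),
    AttackPath("Crash a non-production test endpoint", set(), 1),
]
for p in rank(paths):
    print(f"{p.roi():.1f}  {p.name}")
```

The phishing path ranks first at 2.0, the implant scores 0.4, and the path that delivers nothing sinks to 0.0 no matter how cheap it is.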

Pair every objection with a safer path

If you tell a team “no,” you have created a problem for them.

Sometimes that is necessary. Some designs are genuinely dangerous. But most of the time, your value is not in blocking. It is in redirecting.

Do not only show the abuse path. Show the safer path.

Reduce permissions. Shorten token lifetime. Add approval boundaries. Improve logging. Separate roles. Add detection. Change defaults. Remove unnecessary access. Break the chain.

The best adversarial thinkers are not the ones who find the most flaws.

They are the ones who help teams remove the flaws that matter.
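"Reduce permissions" and "remove unnecessary access" can start from a simple comparison of what each identity is granted against what it actually used. This sketch assumes you can export grants and audit events as plain Python data; the principal and permission names are hypothetical. A permission missing from the audit window is a candidate for removal, not proof it is unneeded: quarterly jobs and break-glass access may simply not have run yet.

```python
from collections import defaultdict


def unused_grants(grants, audit_events):
    """Map each principal to permissions it holds but never used in the audit window.

    grants: dict of principal -> set of granted permissions
    audit_events: iterable of (principal, permission) pairs observed in logs
    """
    used = defaultdict(set)
    for principal, permission in audit_events:
        used[principal].add(permission)
    result = {}
    for principal, granted in grants.items():
        unused = granted - used[principal]
        if unused:
            result[principal] = unused
    return result


grants = {
    "svc-reports": {"db:read", "secrets:read", "iam:write"},
    "svc-ingest": {"queue:read", "db:write"},
}
audit_events = [
    ("svc-reports", "db:read"),
    ("svc-ingest", "queue:read"),
    ("svc-ingest", "db:write"),
]
for principal, perms in unused_grants(grants, audit_events).items():
    print(principal, "never used:", sorted(perms))
```

Here `svc-reports` holds `secrets:read` and `iam:write` without ever using them, which is exactly the kind of link worth breaking before anyone chains it.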

Document assumptions, not just findings

A finding says, “This is risky.”

An assumption says, “This is only safe if X remains true.”

That is much more useful.

Most serious failures come from assumptions that were once true, partially true, or never actually validated. The vendor cannot modify privileged groups. The token is short-lived. The logs are monitored. The admin role is limited. The AI agent can only access approved tools. The service account cannot reach production data.

Write those assumptions down.

Then test them.
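One way to make assumptions testable is to pair each written statement with a check that runs against a configuration snapshot. The statements below come from the list above; the snapshot keys and values are hypothetical, and in practice they would come from whatever exports your identity provider, vendor consoles, or agent framework actually offer.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Assumption:
    statement: str                 # "This is only safe if ..."
    holds: Callable[[dict], bool]  # returns True while the assumption is still true


def broken(assumptions, snapshot):
    """Return the statements that no longer hold for this configuration snapshot."""
    return [a.statement for a in assumptions if not a.holds(snapshot)]


ASSUMPTIONS = [
    Assumption("The token is short-lived (at most 60 minutes)",
               lambda s: s["token_ttl_minutes"] <= 60),
    Assumption("The vendor cannot modify privileged groups",
               lambda s: not (set(s["vendor_writable_groups"]) & set(s["privileged_groups"]))),
    Assumption("The AI agent can only access approved tools",
               lambda s: set(s["agent_tools"]) <= set(s["approved_tools"])),
    Assumption("The admin logs are monitored",
               lambda s: s["admin_log_source"] in s["siem_sources"]),
]

# Hypothetical snapshot; real values would come from your own config exports.
snapshot = {
    "token_ttl_minutes": 60 * 24 * 30,
    "vendor_writable_groups": ["sales", "it-admins"],
    "privileged_groups": ["it-admins", "domain-admins"],
    "agent_tools": ["search_tickets", "open_pull_request", "run_shell"],
    "approved_tools": ["search_tickets", "open_pull_request"],
    "admin_log_source": "vendor-audit",
    "siem_sources": ["idp", "vendor-audit"],
}

for statement in broken(ASSUMPTIONS, snapshot):
    print("BROKEN:", statement)
```

In this snapshot three of the four assumptions fail: the token lives for thirty days, the vendor can write to `it-admins`, and the agent has a `run_shell` tool nobody approved. Only the logging assumption still holds. Running the same checks on a schedule is what catches an assumption that was true at design time and quietly stopped being true.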

Build detections around what the attacker does next

This matters especially for defenders and detection engineers.

Adversarial thinking changes how you write detection logic. You stop only asking, “What event indicates something bad happened?” and start asking, “What would the attacker do next if this worked?”

That shift is huge.

A weak detection catches isolated symptoms.

A better detection understands behavior.

A stronger detection understands progression: access, discovery, privilege escalation, lateral movement, persistence, collection, exfiltration, impact.

If your detection logic does not model what the attacker is trying to achieve, it is probably just logging with confidence.
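A minimal sketch of progression-aware logic, assuming events have already been mapped to the stages listed above: instead of alerting on any single event, it alerts when one identity touches several distinct stages within a time window, then names the next stage to watch. The event format, the one-hour window, the three-stage threshold, and the sample identities are illustrative assumptions, not tuned values.

```python
from collections import defaultdict, deque

# Stages in the order the text lists them.
STAGES = ["access", "discovery", "privilege_escalation", "lateral_movement",
          "persistence", "collection", "exfiltration", "impact"]
ORDER = {stage: i for i, stage in enumerate(STAGES)}


def progression_alerts(events, window_s=3600, min_stages=3):
    """Alert when one identity touches several distinct stages within a time window.

    events: iterable of (timestamp_seconds, identity, stage) tuples.
    Returns (identity, timestamp, stages_seen, next_stage_to_watch) tuples.
    """
    alerts = []
    windows = defaultdict(deque)  # identity -> recent (timestamp, stage) events
    for ts, identity, stage in sorted(events):
        if stage not in ORDER:
            raise ValueError(f"unknown stage: {stage}")
        win = windows[identity]
        win.append((ts, stage))
        # Forget this identity's events that fell out of the window.
        while ts - win[0][0] > window_s:
            win.popleft()
        seen = sorted({s for _, s in win}, key=ORDER.get)
        if len(seen) >= min_stages:
            furthest = ORDER[seen[-1]]
            upcoming = STAGES[furthest + 1] if furthest + 1 < len(STAGES) else None
            alerts.append((identity, ts, seen, upcoming))
            win.clear()  # avoid re-alerting on the same burst
    return alerts


events = [
    (0, "svc-ci", "access"),
    (600, "svc-ci", "discovery"),
    (900, "alice", "discovery"),
    (1500, "svc-ci", "privilege_escalation"),
    (90000, "alice", "collection"),
]
for alert in progression_alerts(events):
    print(alert)
```

With these sample events, `svc-ci` moves from access through discovery to privilege escalation in 25 minutes and produces one alert pointing at lateral movement next; `alice`'s two events are roughly a day apart and stay below the threshold.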

Let low-value attack paths die

Not everything deserves escalation.

This is hard for security people, because finding a valid attack path feels good. But validity is not enough. You need relevance.

Some paths are too expensive. Some require unrealistic preconditions. Some have tiny blast radius. Some are less attractive than five easier options sitting next to them.

Let those go.

Your credibility depends on signal quality.

If you scream about everything, people will tune out the one thing that actually matters.
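Letting a path go works better when the reason is recorded, so the decision can be revisited if the environment changes. This sketch assumes each candidate lists the facts it depends on and carries a hypothetical 0 to 3 blast-radius score; the fact names and candidates are illustrative.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    preconditions: set[str]  # facts that must be true for the path to work at all
    blast_radius: int        # hypothetical scale: 0 (nothing) to 3 (identity or production)


def triage(candidates, environment_facts, min_blast_radius=1):
    """Split candidates into (escalate, closed); every closed entry keeps its reason."""
    escalate, closed = [], []
    for c in candidates:
        missing = c.preconditions - environment_facts
        if missing:
            closed.append((c.name, f"preconditions not present: {sorted(missing)}"))
        elif c.blast_radius < min_blast_radius:
            closed.append((c.name, "blast radius below threshold"))
        else:
            escalate.append(c.name)
    return escalate, closed


facts = {"sso_enabled", "vendor_admin_mfa_exempt", "remote_first"}
candidates = [
    Candidate("Clone badges at headquarters", {"staffed_office"}, 2),
    Candidate("Phish vendor admin, abuse MFA exemption",
              {"sso_enabled", "vendor_admin_mfa_exempt"}, 3),
    Candidate("List the public marketing bucket", set(), 0),
]
escalate, closed = triage(candidates, facts)
print("escalate:", escalate)
for name, reason in closed:
    print("closed:", name, "|", reason)
```

Badge cloning closes because this hypothetical company has no staffed office, the public bucket closes on blast radius, and only the vendor-admin path is escalated. If the company later opens an office, the recorded reason tells you exactly which closed path to reopen.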

Why It Still Matters

The adversary mindset is not free.

It creates mental load. It creates friction. It makes you early to problems other people do not want to see yet. And if you are not careful, it can turn into the kind of performative contrarianism that gives security people a bad name.

But it is still worth it.

Especially now.

AI will make attackers faster. It will make experimentation cheaper. It will help less technical people generate more sophisticated ideas. It will also help defenders move faster, if they know what they are doing.

But AI will not magically create judgment.

It will not understand your environment the way you do. It will not know which trust assumptions are politically convenient but technically fragile. It will not feel the difference between a clever attack path and one a real adversary would actually use. It will not replace the practitioner who can look at a system and see the abuse case hiding inside the intended workflow.

That is still a human skill.

At least for now.

The point is not to become paranoid. The point is to become harder to surprise.

Because when you think like an attacker, you see the threats that matter before they become incidents. You build detections around behavior, not just events. You design systems that survive abuse, not just systems that work on the happy path.

And when compromise happens, because eventually it does, you are not starting from zero.

You have already asked the uncomfortable questions.

You have already mapped the paths.

You have already thought about what comes next.

That is the leverage.

That is why the cost is worth managing.

I do not think I can turn it off anymore.

I am not sure I would want to.